Technology

Alexander Waibel

Alexander Waibel is Professor of Computer Science at Carnegie Mellon University and the Karlsruhe Institute of Technology. A pioneer in machine translation, he focuses on speech recognition, translation, and human-computer interaction, advancing cross-language communication tools for both consecutive and simultaneous interpreting.

What are the scientific differences between Physical AI and existing Large Language Models?

Both originate from the same principles that have driven AI’s evolution. In particular, the shift from rule-based systems to neural networks, especially in speech and language, was crucial. Early AI relied on hand-written rules, simple “if this, then that” recipes, which struggled with real-world complexity. Modern systems use neural networks that learn from examples. A central idea is hidden representations, which enable learning and handling uncertainty: the model creates its own internal notes about patterns (such as accents, meanings, and context), helping it cope with ambiguity and noise. From the beginning, this approach also supported combining different kinds of inputs and outputs, such as pairing what you hear with what you see (lip movements or other visual cues), to improve understanding or to control actuators, objects, and movement in the physical world. Such hidden and learned knowledge is thus critical to representing the physical world and connecting an AI system to it.

This notion of hidden representations is also what current multimodal AI builds on. We are talking about systems that learn jointly from text, audio, and images rather than from a single source.

Yes. This is huge. Neural models don’t care what information we feed them. They can learn from any useful features. And it must be emphasized that the availability of stronger hardware and much larger datasets is what now makes this approach truly effective.

So what’s really new?

Learning internal representations remains at the heart of today’s best AI, and combining modalities often works better than using one alone. This was understood 40 years ago. What is new is the environment: with more data and more powerful computers, the full potential of these advances has been unlocked. This matters because it leads to much better speech recognition and translations that handle noise, accents, and context, and to smarter assistants that can watch, listen, and read at the same time—a more robust and richer alternative to early AI methods.

But what was the role of recent scientific breakthroughs?

Ideas like attention and transformers were built on concepts from the 1980s and 1990s. Transformers, however, combine several of these concepts in clever ways that deliver significant performance gains in tasks such as speech recognition and machine translation. These advances were revolutionary in practice because they seem to be limited only by compute and data (which we now have in abundance), but the underlying algorithms were evolutionary, often relying on algorithmic and engineering refinements rather than entirely new concepts.

What about future improvements? Will they come from more computing power and more data, even for the physical version of AI?

The answer is open. Current AI models rely heavily on large datasets and compute, but they may be approaching a performance plateau. This could happen because compute and learning may begin to outpace the growth of data on the internet. So, as a scientist, I’m thinking: why do we need so much data? We are already training models with way more data than a human learns in a lifetime. So how come we are arguing about computers being more intelligent than humans, while they need so much more data than humans? In a way, there is a flaw in the thinking that just more computation and more data will be enough. AI’s learning is not specific and focused enough. We need an AI that knows when it doesn’t know and can learn incrementally from local interaction, much like humans do. This present lack of adaptability and specialization is not sufficiently appreciated, because today’s AI systems can absorb and learn from so much more data than humans can. They appear superhuman despite such rigidity.

But what’s your personal opinion about this? Will the most important improvements come from more computing and data, or from new scientific breakthroughs?

In my opinion, a more human-like approach to learning, with incremental and interactive updates, could be more efficient and energy-saving. It is not the mainstream approach, which relies on more data and computing. If we proceed like this, we miss some key ingredients. Current models don’t operate on large context, and they don’t really use the whole story; they just focus on a sentence. In understanding context and narrative, for example in translation, humans are still better. So we may ask: why don’t we just learn a broader context? There are technical reasons, since incremental knowledge must be woven and harmonized carefully into existing knowledge. But more importantly, learning from individual persons and learning a particular context is generally not possible as it would violate our privacy. If you aggregate the information from many people, you will get an average narrative and not the individual context that needs to be understood in practice. Humans learn incrementally, interactively, and privately. They create their own representations and models every day. Incremental, continuous learning is a frontier, and we are working on such methods. Imagine a model that learns from you and creates personal knowledge that is kept locked up and private. We could separate local knowledge and centralized knowledge. Such methods are sometimes referred to as “edge computing.” At our labs, we call it “dreaming AI” or “sleeping AI”: learn during the day and digest during the night, for an incremental learning process. This could be a new form of AI.

Could this also solve the energy consumption problem for AI?

Correct. It is an AI that takes inspiration from nature. We are individuals, we are kids, we are grownups, we aggregate to some extent through communication, and we could think of an AI that does all those things to learn in different modalities in the physical world.

This is important for robotics, too…

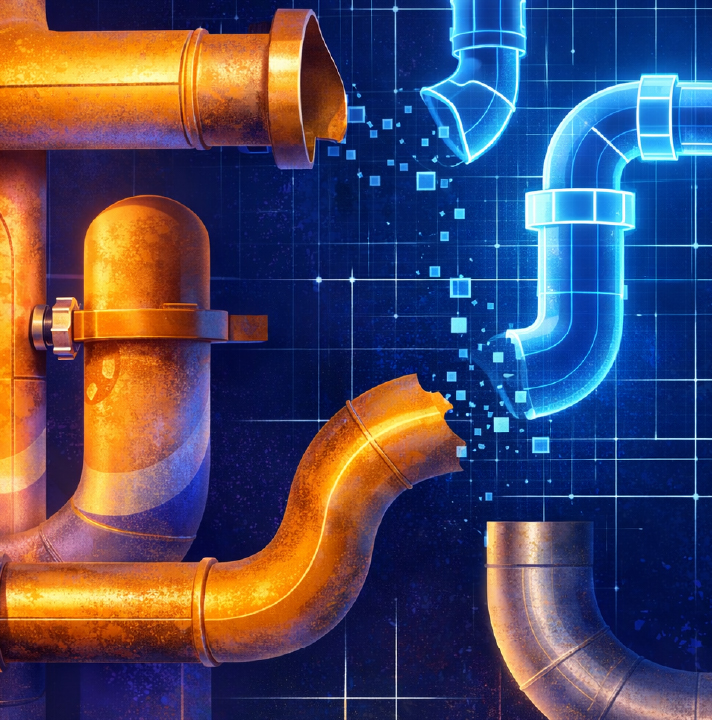

Indeed. So far, robotics is the slowest part of the AI revolution, because it needs all these mechanical parts that are difficult to build. And today’s robots are crude compared to humans: human hands are amazing. Human motion. Human self-repair and healing. While we can build AI that cannot be beaten at chess or Go, we still cannot replace a plumber. And it will continue like that for quite a while. Plumbers don’t do quantum physics, but they make more money than people in many other jobs.

If you have an emergency, you pay anything. But why can’t this be automated, too? Simple. We are currently only in a digital environment, and so everything has to be brought into that realm first. The hardest part of AI playing chess is to move the pieces on the board. Physical devices rely on carefully engineered hardware and thus are quite inflexible. The plumber, however, has to deal with pipes that are different in every house, so you can’t normalize the job easily—you would need adaptation of the hardware to the different physical context, as well. It’s a bit like the rules of early AI: too much of a manual craft. But there are some exciting things on the horizon: for example, could we create intelligent hardware that reconfigures itself? AI and 3D printing may adapt things to different environments. And they may translate between physical spaces, just as we have learned to translate between languages in machine-to-machine communications.

What are the social implications of all this?

The implications are unprecedented. Exciting and scary at the same time. This will require a sober examination, by scientists and policymakers alike, of what kind of society we hope to build and what role AI should play for us, rather than getting caught up in a reckless gold rush. We, too, believe in humans!