Economy + Geopolitics

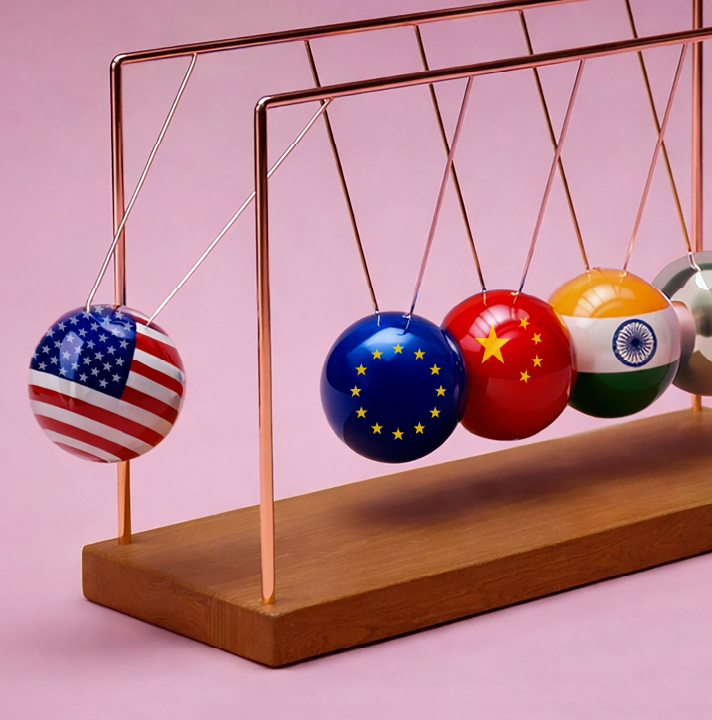

Applications of Physical Artificial Intelligence (Physical AI), that is, AI embedded in machines that perceive and act in the physical world, span multiple domains. For now, its most common use remains industrial manufacturing, where China leads the field. China had 189,000 industrial robots in operation in 2014; by 2024, that number exceeded two million. Last year alone, China installed 295,000 robots, a staggering figure when set against Germany’s 27,000, the United States’ 34,000, and the UK’s mere 2,500. Physical AI slashes costs and amplifies productivity, and this advantage translates into market dominance.

Consider the automotive sector. Analysts link the deployment of Physical AI to Chinese manufacturers’ aggressive expansion into Europe. BYD sold 47,000 vehicles across Europe in Q3 2025, a 238% increase year-on-year. Chinese brands captured 11.3% of Europe’s electric vehicle market in 2025, up from 4.8% the previous year. These figures fuel the international race to develop Physical AI. They also intensify pressure on the EU to relax its digital regulations in favor of innovation. The argument runs: if the EU cannot win the race, it must at least compete, and to do so it must loosen, if not abandon, its regulation of digital technologies and AI. I disagree entirely.

At first glance, the AI arms race seems to be only about innovation. In reality, it is about adoption and market share. Past innovations offer instructive parallels. Karl Benz registered the first car patent in Germany on January 29, 1886. Today, the world’s most common cars are not Mercedes-Benz. They are not even German. They are Toyotas, with 11.5 million sold in 2024.

The lesson is clear. Being first with cutting-edge technology, that is, innovating, does not guarantee success in adoption. Success requires turning technology into a commodity: reliable, affordable, scalable, and user-friendly. This transformation demands adequate governance.

The tension between innovation and regulation is well-trodden ground. Academia has long grappled with the Collingridge dilemma (Collingridge and Reeve 1986; Taddeo et al. 2024): govern too soon and you may stifle innovation; govern too late and the harms become entrenched and difficult to reverse. The dilemma sheds light on a crucial third element: adoption. Technology that proves unsafe or systematically violates user rights may not be adopted. The hedge matters: social media achieved wide adoption despite violating users’ privacy, but in that case the violations took time to become evident.

Innovation alone may not require governance (that is, technical standards, best practices, supervisory authorities, and laws), but successful innovation, the kind that achieves adoption, does. This is good news for an EU that wants to leverage Physical AI. Physical AI demands hardware-software integration, industrial expertise, and reliability rather than raw computational power. These are European strengths. Robotics and AI research have never been wholly separate. Some may recall Rodney Brooks’ subsumption architecture and the behavior-based robotics of the late 1980s and 1990s, which challenged symbolic AI by prioritizing reactive sensorimotor coordination over high-level reasoning (Brooks 1986; 1999).

Imminent Research Report 2026

A journey through the next generation of AI: the moment when machines begin to learn from and interact with the real world in real time.

The ultimate resource for understanding the next phase of AI innovation. Written by a global, multidisciplinary community. Designed to navigate a world where machines mature, learn from experience, and retain memory—while humans remain responsible for how AI acts in the world, and language continues to be the primary way intelligence is created.

Yet over the past decade, robotics progressed steadily whilst AI achieved genuine breakthroughs. Physical AI integrates AI and robotics systemically, so the opportunities and challenges of each will compound those of the other. Governance becomes essential, not optional.

Consider cybersecurity. AI systems are fragile and easy to penetrate. Cyberattacks on AI differ fundamentally from attacks on other digital systems: they aim to manipulate a system’s behavior, not merely disrupt it. In November 2025, Anthropic reported such an attack on Claude. Attackers manipulated the system and exploited its agentic capabilities to carry out sophisticated attacks on major companies’ digital infrastructure, exfiltrating data for espionage. Now imagine manipulating an AI system embedded in a drone or a combat robot on the battlefield. The gravity requires no elaboration.

Nudging an AI system’s behavior can require nothing more than cunning prompting or the poisoning of a fraction of its training data, as the research literature abundantly confirms (Taddeo et al. 2019; Taddeo 2019; Tsamados et al. 2023). AI systems embody the Trojan horse with uncomfortable fidelity: the wider our adoption, the larger the attack surface. No silver bullet exists, but appropriate governance can mitigate the risk. Security standards, mandated implementation methods, and requirements for human supervision all reduce the likelihood that attacks on AI produce catastrophic physical outcomes.

The EU is rightly preparing to compete on innovation. But it may take years, or decades, to be ready. In the meantime, it must not miss the greater opportunity: leading the competition for adoption, particularly the adoption of Physical AI. The EU can do this precisely because of the comprehensive framework for digital governance it has developed over the past decade, from the GDPR to the AI Act. This is not just a strategic move; it is also a duty. The EU must ensure that the adoption of new technologies turns our societies into better digital democracies rather than into a systematic tool for eroding democratic values and rights.

European firms can create distinctive value by transforming generic robots into sector-specific solutions for automotive, logistics, agriculture, and energy. This approach draws on Europe’s rich engineering heritage and deep understanding of complex industrial processes, converting engineering excellence into a competitive advantage. It would also advance EU digital sovereignty, giving Europe control over critical automation technologies rather than leaving it dependent on foreign providers. Italy illustrates this potential: its tradition of precision mechanics and collaborative robotics positions it to lead in cognitive automation.

The friction between innovation and regulation poses genuine problems. But framing AI and digital innovation as a binary choice between innovating and regulating serves neither the technology sector nor liberal democracies. More importantly, this debate has become obsolete. Cutting-edge digital technologies continue to emerge, but digital infrastructure (AI, cloud computing, satellites, even smart hoovers) now forms the backbone of our societies. The urgent questions concern how we design this infrastructure and what purposes we adopt it for. The answers all begin with governance.

Mariarosaria Taddeo

Mariarosaria Taddeo is Professor of Digital Ethics and Defence Technologies at the University of Oxford and Grande Ufficiale al Merito della Repubblica Italiana. Her work focuses on the ethics of AI for national defence, cyber conflicts, and the ethics of digital innovation. Her research has been published in major journals, and she has received multiple awards, including the 2010 Simon Award for Outstanding Research in Computing and Philosophy and the 2016 World Technology Award for Ethics.

References

Anthropic. 2025. ‘Disrupting the First Reported AI-Orchestrated Cyber Espionage Campaign’. Anthropic News, 13 November 2025.

Brooks, R. 1986. ‘A Robust Layered Control System for a Mobile Robot’. IEEE Journal on Robotics and Automation 2 (1): 14–23. https://doi.org/10.1109/JRA.1986.1087032.

Brooks, Rodney, ed. 1999. Cambrian Intelligence: The Early History of the New AI. MIT Press.

Carmignani, Maurizio. 2025. ‘Robotica industriale, la Cina fa paura: l’Occidente è in ritardo’ [Industrial robotics: China is frightening, the West lags behind]. Agenda Digitale, 31 October 2025.

Collingridge, David, and Colin Reeve. 1986. Science Speaks to Power: The Role of Experts in Policy Making. Pinter.

Jensen, Mads. 2025. ‘Physical AI: Europe’s Next Category Leaders’. SuperSeed Journal, 1 November 2025. https://www.superseed.com/journal/physical-ai-europes-next-category-leaders/.

SmartBuy. 2025. ‘Best Selling Car Brand in the World 2024: Toyota Dominates with 11.5M Sales’. Alibaba.com – SmartBuy, 29 November 2025.

Taddeo, Mariarosaria. 2019. ‘Three Ethical Challenges of Applications of Artificial Intelligence in Cybersecurity’. Minds and Machines 29 (2): 187–91. https://doi.org/10.1007/s11023-019-09504-8.

Taddeo, Mariarosaria, Alexander Blanchard, and Kate Pundyk. 2024. ‘Consider the Ethical Impacts of Quantum Technologies in Defence—before It’s Too Late’. Nature 634 (8035): 779–81. https://doi.org/10.1038/d41586-024-03376-4.

Taddeo, Mariarosaria, Tom McCutcheon, and Luciano Floridi. 2019. ‘Trusting Artificial Intelligence in Cybersecurity Is a Double-Edged Sword’. Nature Machine Intelligence 1 (12): 557–60. https://doi.org/10.1038/s42256-019-0109-1.

Tsamados, Andreas, Luciano Floridi, and Mariarosaria Taddeo. 2023. ‘The Cybersecurity Crisis of Artificial Intelligence: Unrestrained Adoption and Natural Language-Based Attacks’. SSRN Electronic Journal, ahead of print. https://doi.org/10.2139/ssrn.4578165.