
Originally published in the May issue of the Smart Signals newsletter.

Algorithms are deciding who gets benefits, flagging fraud, and drafting judicial sentences. AI applied to governance is no longer a future scenario — it is increasingly widespread, and its consequences are real.

In this issue, we dive deep into how AI is becoming a defining feature of the societies we live in. From macro trends (demographic pressure, market dynamics, governance frameworks) to the granular reality of specific countries and cases, we explore how AI in governance is deployed and what consequences it brings. Understanding this leads to a bigger point: AI is not only a technological matter, but a cultural, linguistic, and economic one.

From global trends to voices in the field, we explore AI deployment in governance and how the strategies we’re using today can help build the societies of tomorrow. Because navigating the future of business isn’t about waiting for the dust to settle; it’s about learning to see through it.

The takeaway

Governing with Artificial Intelligence

The State of Play and Way Forward in Core Government Functions

By OECD

Artificial intelligence is embedded in how public administrations operate, deliver services, and make decisions. Across 48 countries, thousands of AI projects are underway. Yet adoption remains uneven, implementation often stalls at the pilot stage, and trust between citizens and their governments is under pressure: only 39% of people express moderately high or greater trust in their national government.

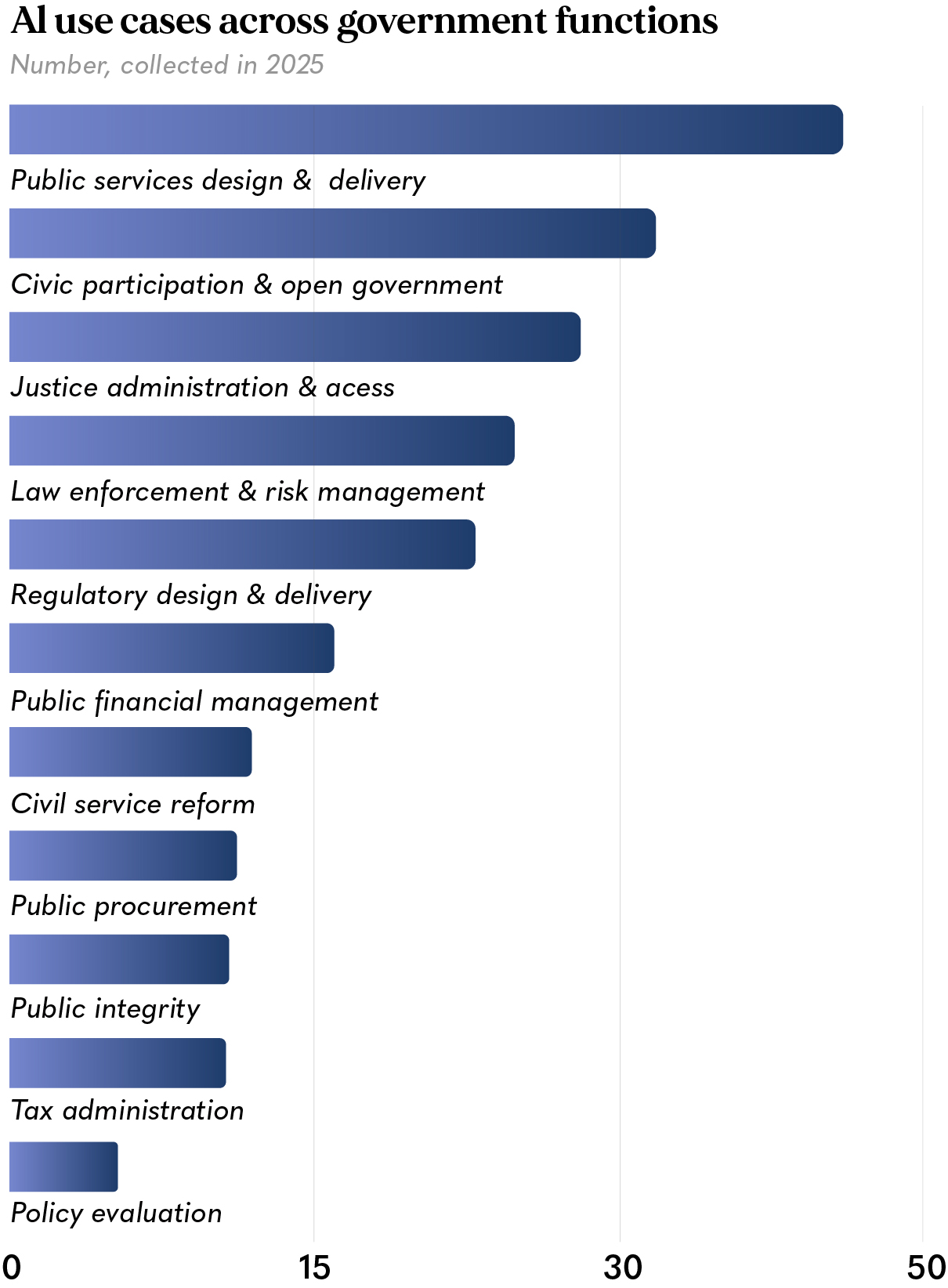

Drawing on the analysis of 200 AI use cases across 11 core government functions and dozens of governance frameworks, the OECD’s Governing with Artificial Intelligence (2025) maps where AI is gaining traction in the public sector, where it is failing to scale, and what trustworthy adoption actually requires. We read and analyzed all 306 pages of the report. Here are our takeaways.

1. Where Governments Are Using AI

The concentration at the top of the chart is not accidental. Public service delivery and civic participation are where political pressure is highest and where failures (or gains) are most visible — making them the natural first targets for AI investment. Policy evaluation and procurement, by contrast, involve complex institutional dynamics and accountability chains that make automation more politically sensitive.

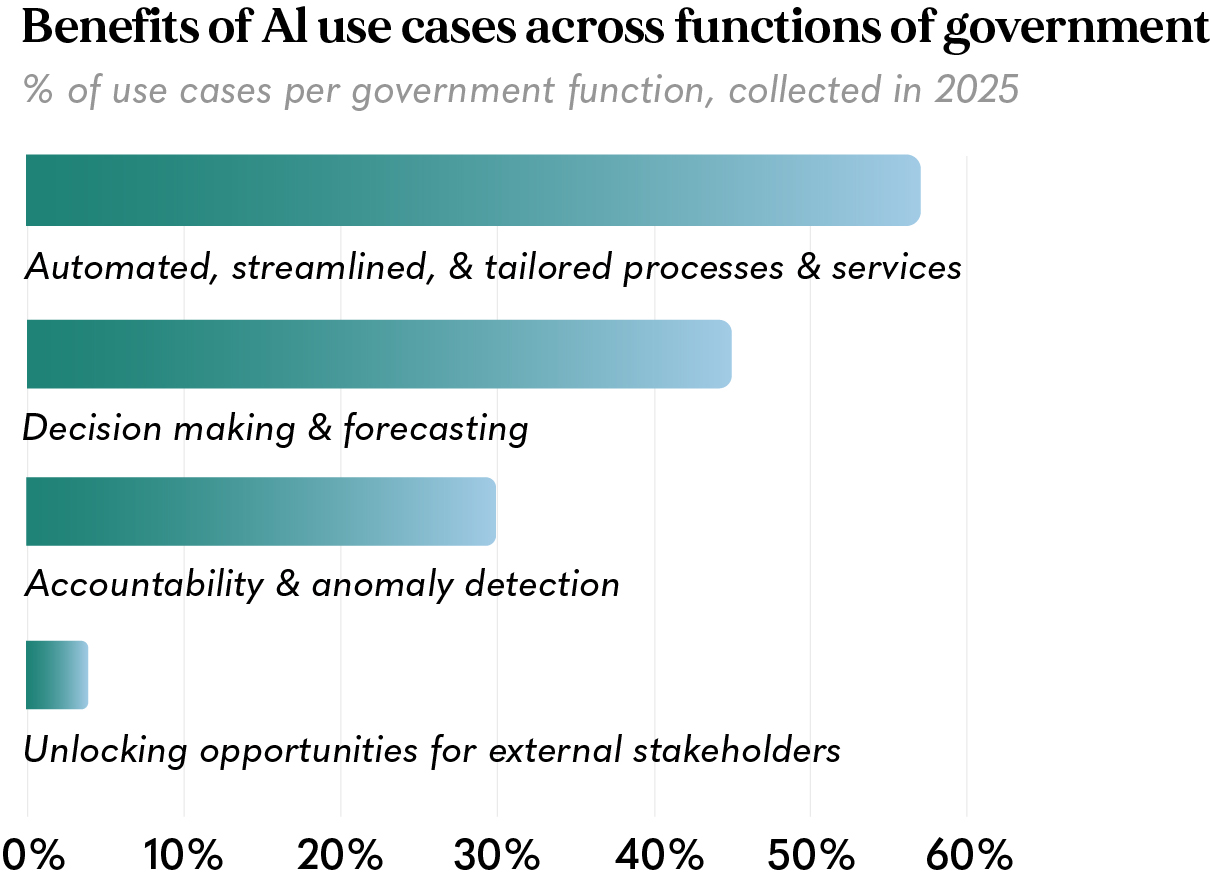

2. What Benefits Governments Are Pursuing

Governments are deploying AI overwhelmingly to serve themselves: to process faster, decide better, and detect fraud. Using AI as a genuine public good, one that gives citizens more agency over the services that affect them, remains almost entirely aspirational.

The near-absence of AI use cases oriented toward empowering external stakeholders (citizens, civil society, businesses) is the sharpest alert in this data.

Meanwhile, the Alan Turing Institute estimates:

- 84% of repetitive public service transactions could be automated by AI in the UK alone

- 1,200 person-years of work could be saved annually

- 9% of actual documented use cases involve automating routine tasks

Efficiency gains are being left on the table because, for all the potential benefits AI may bring, it also carries equally significant risks.

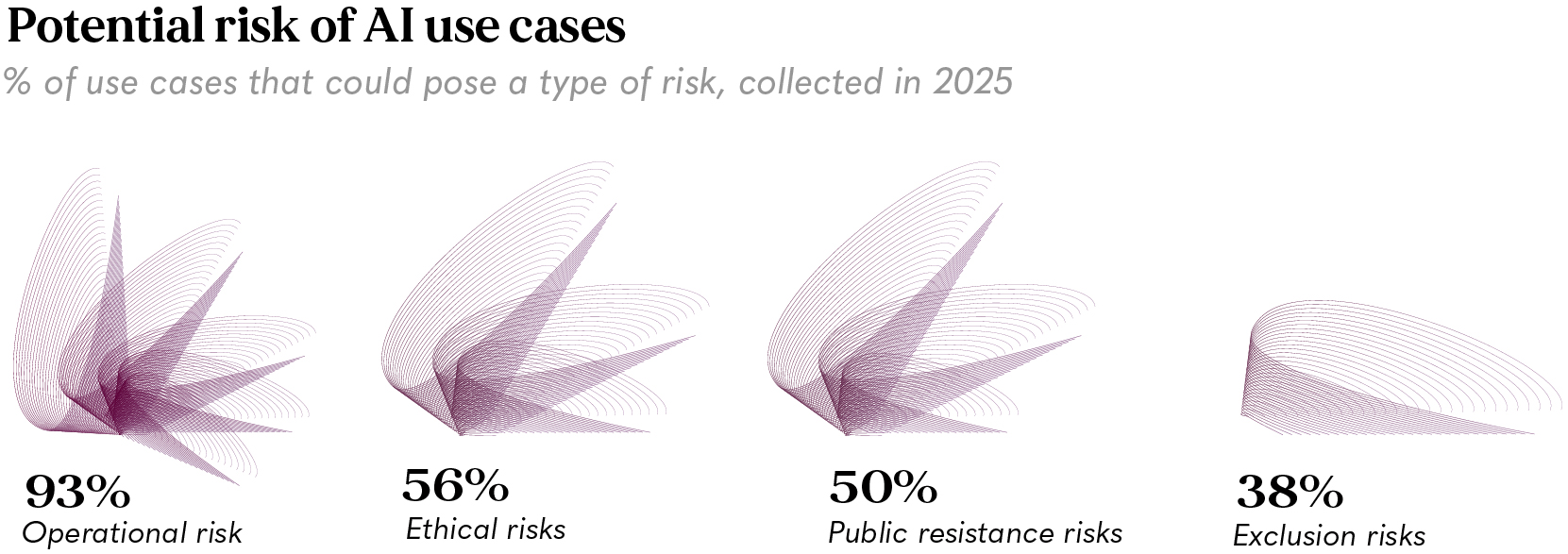

3. The Risk Landscape

The near-universality of operational risk, present in 93% of all cases, reflects a structural reality: government AI systems operate at scale, on sensitive data, and with legal consequences. A private app crashing affects one user. A government algorithm misfiring can affect millions, often the most vulnerable.

The OECD documents two cases that have become the field’s defining cautionary tales:

- 🇦🇺 Australia — Robodebt: 470,000 incorrect debt notices were issued without human verification, ultimately ruled unlawful

- 🇳🇱 Netherlands — Toeslagenaffaire: 26,000 families were wrongfully accused of benefits fraud, driven by a bias that disproportionately targeted people with dual nationalities or migrant backgrounds

Both failures share the same root cause: the absence of meaningful human oversight at the point where automated decisions intersected with citizens’ lives.

4. Stuck in Pilot Mode

Beyond the risks, there is also a structural issue: only 40% of EU public-sector AI use cases are fully implemented.

The gap isn’t technical — pilots are often the preferred entry point for governments exploring AI adoption. They allow institutions to experiment, build internal familiarity, and demonstrate innovation while postponing some of the more difficult organizational shifts that large-scale deployment requires.

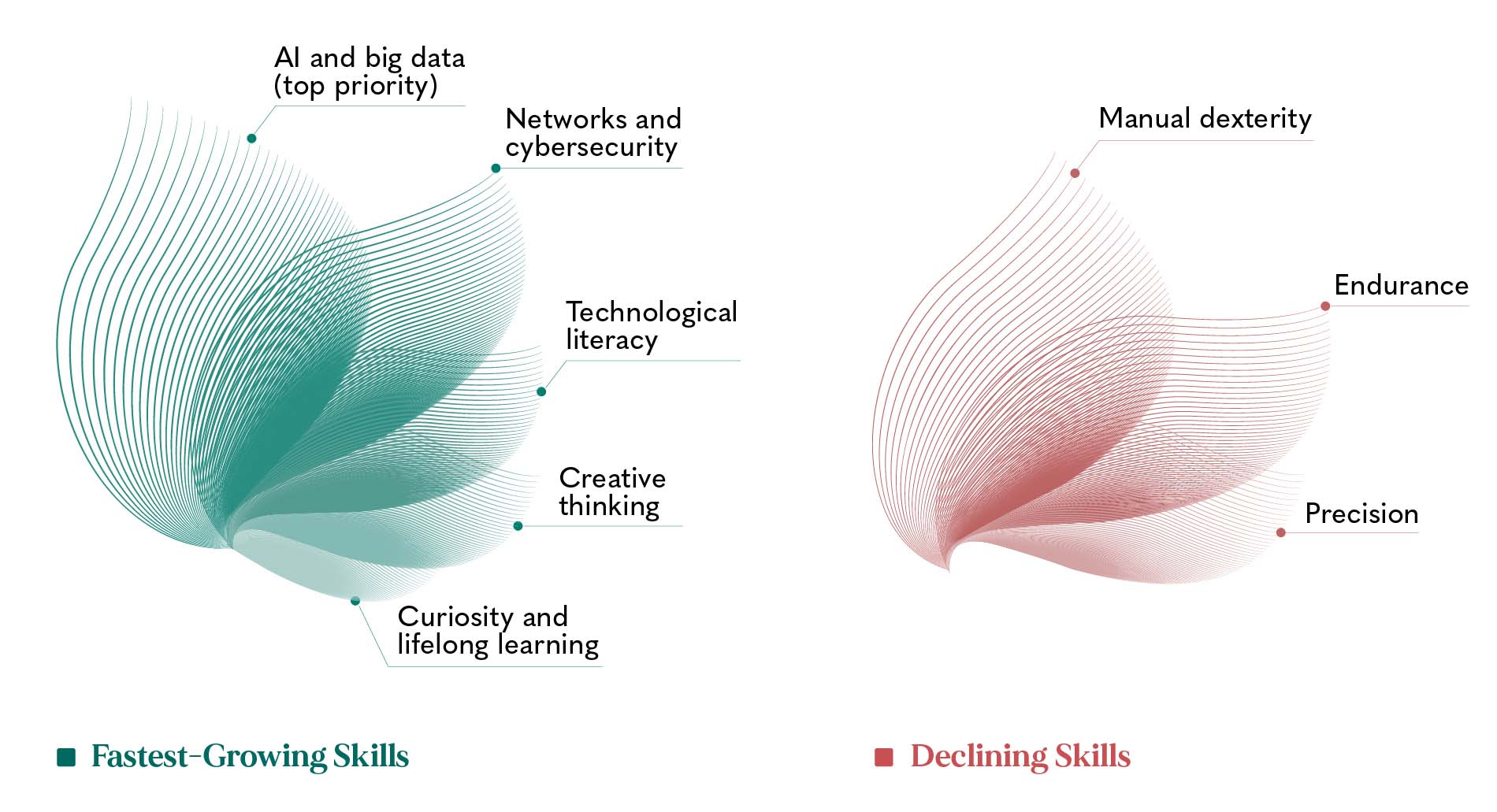

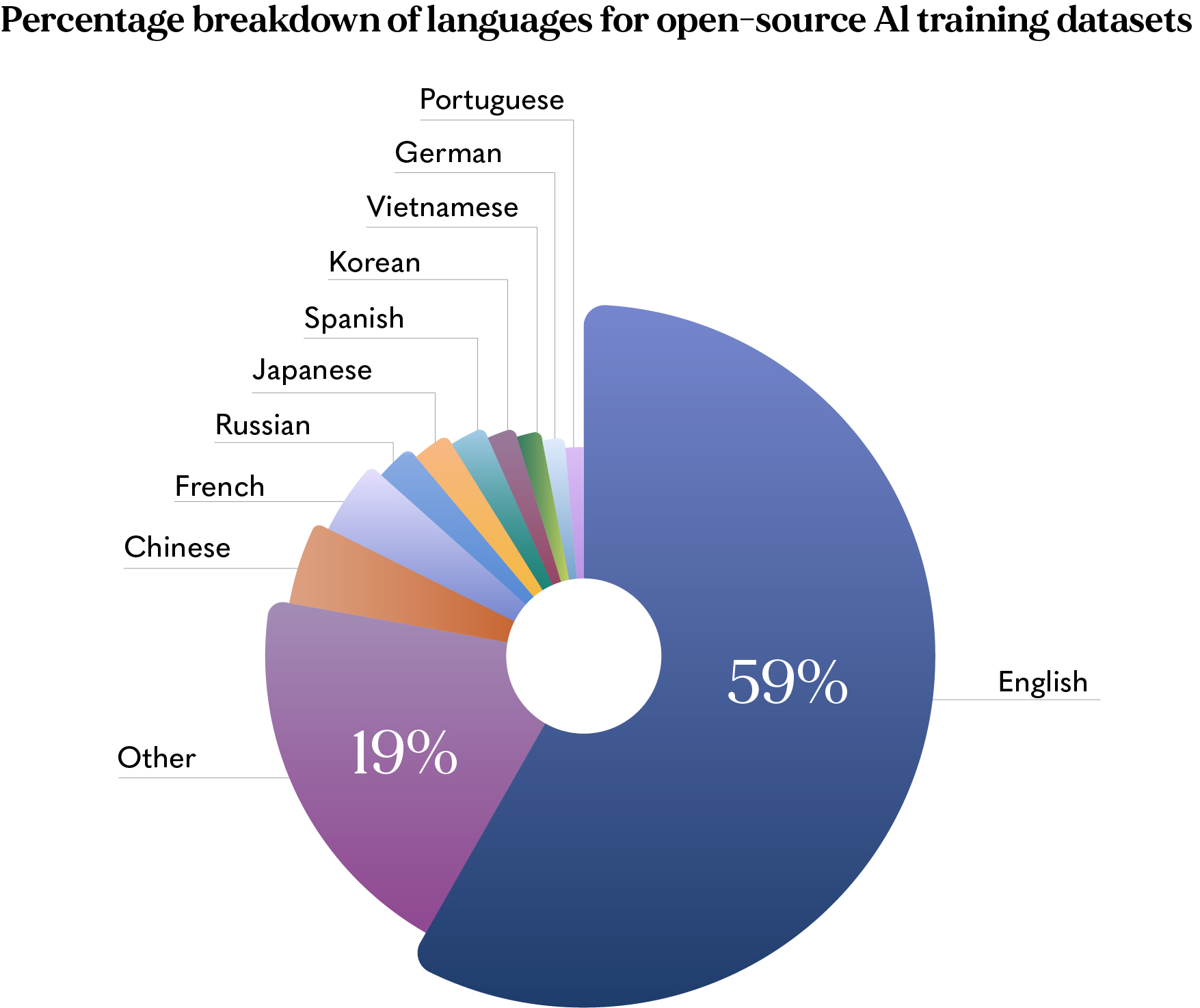

The barriers are well-documented. Skills gaps, data silos, and risk aversion are consistently cited across countries. But the most underappreciated blocker may be linguistic — and it is one the OECD data makes visible in a striking way:

Governments aiming to deploy AI that genuinely serves all their citizens are building on a foundation that structurally disadvantages non-English speakers. The communities that most need effective, accessible public services are often the least represented in the data that trains the tools designed to serve them.

5. AI in Action — Selected Real-World Cases

Governments that have moved past the pilot phase are delivering concrete, measurable results. A few cases show what this looks like in practice:

- 🇦🇷 Argentina — Prometea: AI in the Buenos Aires justice system cuts sentencing drafting from 1 hour to 10 minutes, freeing prosecutors to focus on case strategy rather than document production

- 🇧🇷 Brazil — AI Litigation Project: Tax courts group similar appeal cases algorithmically, dramatically reducing backlogs — a function where case volume had long outpaced human capacity

- 🇧🇪 Belgium: AI predicts road slipperiness and pre-positions de-icing resources before accidents happen — shifting government from reactive to anticipatory

- 🇨🇦 Canada — Alberta: A wildfire prediction system using historical and ecological data helps emergency services deploy resources before fires spread

- 🇪🇺 EU Parliament: An AI search tool opens 20 years of parliamentary documents and 38,000 motions to citizens and lawmakers in seconds

What these cases share is not the technology — it’s the approach. In each one, AI is handling the volume and pattern-recognition work that would otherwise consume human time, while decisions, judgment, and accountability remain with people. That alignment between what machines do well and what humans must retain is precisely what the OECD identifies as the condition for successful, trustworthy adoption.

6. The Bottom Line

Government AI is real, widespread, and consequential. But it is mostly stuck in a phase of isolated experiments that do not scale, built on infrastructure that is unevenly developed, trained on data that is overwhelmingly English-language, and deployed by workforces that have not been prepared for it. The gap between what AI could deliver for public services and what governments are actually achieving is enormous.

Closing it requires not just technical capacity, but governance architecture, trust-building, and multilingual investment — alongside the political will to define what good outcomes look like and then actually measure them.

Field Notes

Governance & AI – A Conversation

Governments worldwide aren’t just adopting AI — they’re revealing, through every procurement decision, regulatory gap, and accountability failure, what kind of societies they actually want to build. To understand what’s truly at stake, we spoke with three leading voices working at the intersection of AI, governance, and human rights.

Featured conversations:

- Vilas Dhar (President of the Patrick J. McGovern Foundation, AI scientist, and former member of the United Nations Secretary-General’s High-Level Advisory Body on AI) on what’s truly at stake in deploying AI in the governance of our societies.

- Lawyer and governance expert Arthur Sidney on procurement, institutional authority, why having an AI framework on paper is never enough, and what Africa is getting right that the West isn’t.

- Michael Santoro (business ethicist, human rights scholar, and senior fellow at the Markkula Center for Applied Ethics at Santa Clara University in Silicon Valley) on where human oversight should actually sit in AI systems, why our current tools for measuring harm are broken, and what genuine accountability would require.

Economic Watch

Governing the Governors: At What Cost?

- AI governance is shifting from a discretionary investment to a market imperative, driven by regulatory pressure and the growing financial cost of ungoverned AI deployment.

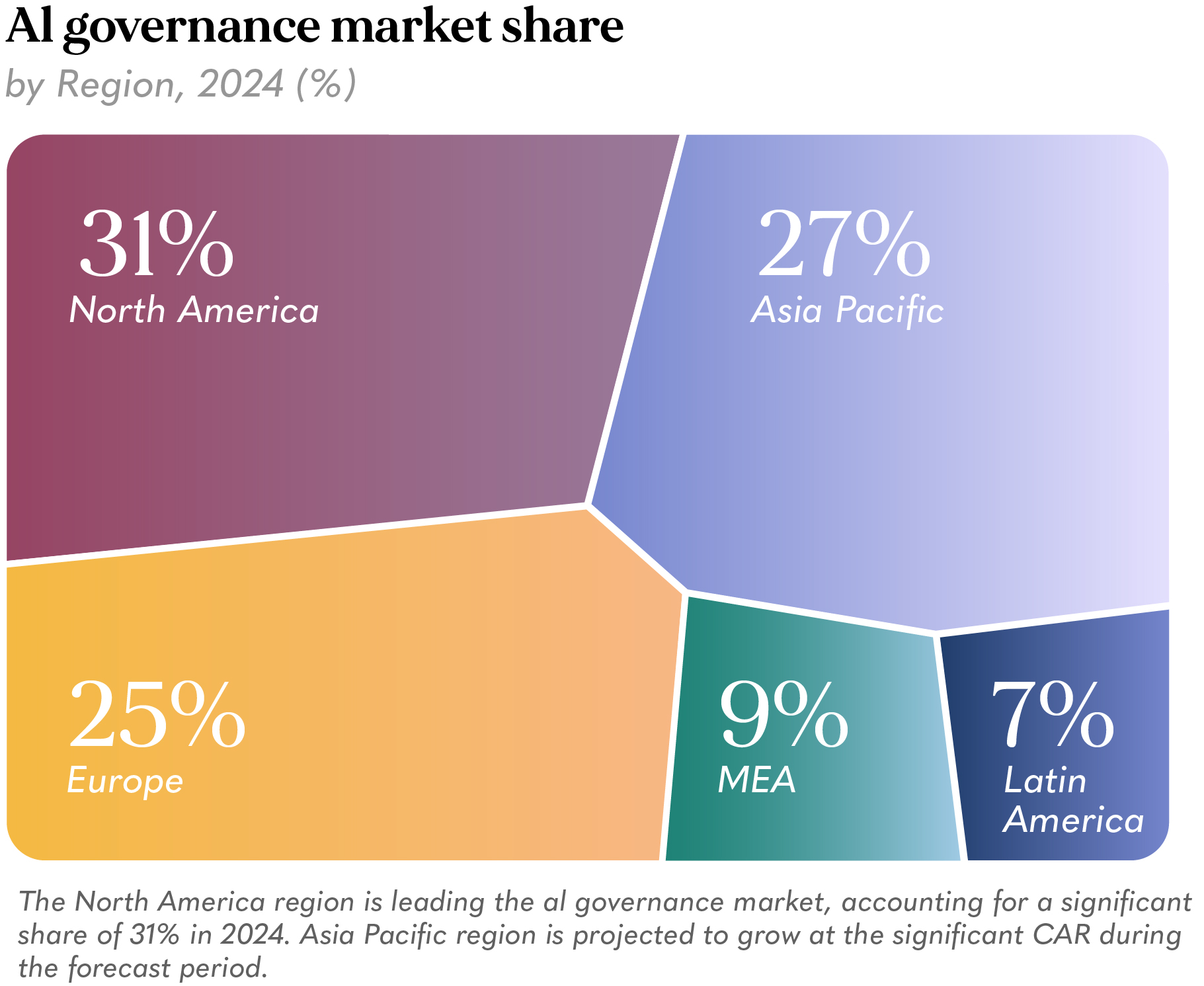

- The global AI governance market stood at $227 million in 2024 and is projected to reach $4.8 billion by 2034 — a nearly 36% annual growth rate.

- North America leads today, but the geography is actively shifting: Europe through legislation, Asia-Pacific through scale.

Source: Precedence Research
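
The annual growth rate quoted above can be sanity-checked with a compound annual growth rate (CAGR) calculation. The sketch below is a minimal illustration, assuming the 2024 and 2034 figures are the start and end points of a ten-year span; the function name `cagr` is ours and does not come from Precedence Research.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text: $227 million (2024) to $4.8 billion (2034).
rate = cagr(227e6, 4.8e9, 2034 - 2024)
print(f"{rate:.1%}")  # prints 35.7%
```

The result is consistent with the "nearly 36%" figure: a market growing at that pace multiplies roughly 21-fold in a decade.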

As AI deployment accelerates across governments and industries, a parallel industry is emerging to manage its risks. The AI governance market — spanning bias detection tools, model monitoring platforms, explainability frameworks, and compliance tracking systems — is expanding at a pace that reflects both the scale of AI adoption and the growing recognition of what happens when it goes wrong. The fastest-growing functional segment is risk management and compliance — precisely the capability most relevant to governments deploying AI at scale.

Geographically, the picture mirrors the broader dynamics of AI adoption. North America leads on market share, anchored by a dense technology ecosystem and early enterprise adoption. Europe’s 25% share is shaped less by volume than by regulatory architecture: the EU AI Act is making compliance spending a structural inevitability for any organization operating in European markets. Asia-Pacific, at 27%, is the region to watch: governments in Singapore, South Korea, and China are accelerating governance frameworks in step with their AI deployment, and the region is projected to grow at the fastest rate through the decade.

Reading this market data alongside the OECD findings raises an uncomfortable question: if governance tools are being built and bought primarily where AI is already strongest, who is protecting the regions that need protection most? The countries most underrepresented in training data, most dependent on systems built elsewhere, and with the weakest institutional infrastructure to challenge them are also the smallest slices of this market. A governance industry that grows fastest where AI is already dominant may widen, rather than close, the gap between those who shape these systems and those who are shaped by them.

The big picture

Who Will Run the State in 2050?

Beneath the changes in the way governments choose to govern their citizens, there are deeper transformations actively changing the composition and needs of these societies themselves.

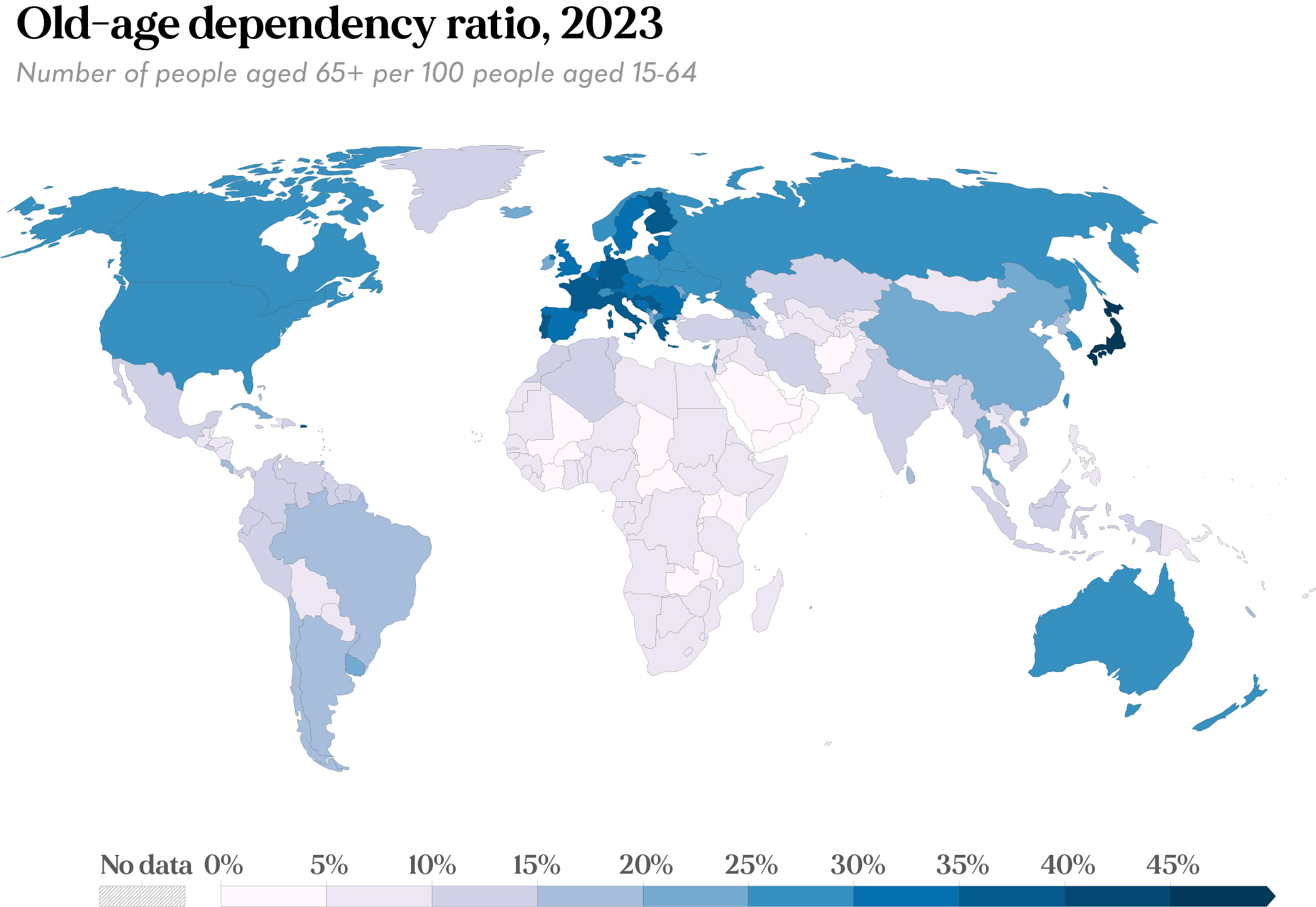

Across advanced economies, one demographic phenomenon in particular is forcing governments to act: ageing populations are pushing public systems to their limits. More healthcare, more pensions, fewer workers to fund them. AI is increasingly the answer governments are reaching for, not as an innovation agenda but as a structural response to a fiscal pressure that compounds every year.

Whether the governance to make that response trustworthy will keep pace, and whether it will work equally well for societies ageing fast and for those that haven’t started yet, is what this moment is actually about.

Source: Our World in Data


The numbers make the pressure concrete. The EU is projected to face population decline within the next decade. Japan already has two working-age people for every person over 65. South Korea’s fertility rate stands at 0.75 children per woman, implying a demographic half-life of roughly 20 years. As old-age dependency ratios rise, ageing-related spending on pensions, healthcare, and long-term care increases precisely as the tax base contracts. The European Central Bank (ECB) has flagged the risk directly: without significant productivity gains, deteriorating growth and rising sovereign debt concerns could undermine financial stability across advanced economies. AI is increasingly that productivity offset.
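
The text does not say how the "half-life of roughly 20 years" is derived. One back-of-the-envelope reading, sketched below, treats 0.75 as a total fertility rate and asks how long it takes for each birth cohort to shrink to half its size. The replacement rate of 2.1 and the 30-year generation length are our assumptions, not figures from the source, and the function name is hypothetical.

```python
import math


def cohort_half_life(tfr: float,
                     replacement_tfr: float = 2.1,    # assumed replacement-level fertility
                     generation_years: float = 30.0   # assumed length of one generation
                     ) -> float:
    """Years for birth-cohort size to halve if fertility stays at `tfr`."""
    # Each generation is this fraction of the size of the one before it.
    ratio = tfr / replacement_tfr
    if not 0 < ratio < 1:
        raise ValueError("a half-life only exists for fertility below replacement")
    generations_to_halve = math.log(0.5) / math.log(ratio)
    return generation_years * generations_to_halve


half_life = cohort_half_life(0.75)
print(round(half_life, 1))  # prints 20.2
```

Under these assumptions each generation is about 36% the size of the previous one. A longer assumed generation length would lengthen the half-life proportionally, so the result is an order-of-magnitude check rather than a forecast.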

Taiwan makes this logic concrete. It became an “aged society” in 2018 and reached “super-aged” status in 2025 — a transition that took seven years, compared to 11 in Japan, 19 in Italy, and 36 in Germany. Its response draws directly on its strengths in semiconductors and digital infrastructure: assistive robotics, telemedicine, AI-powered cognitive tools for older adults, and a Long-Term Care 3.0 Plan launching in 2026 that builds community-based “10-minute care circles” integrating AI-assisted healthcare with human caregiving. Technology and social policy designed together, not bolted onto each other.

The Global South faces a different version of the same pressure. Low old-age dependency ratios today mask a large, economically inactive young population and institutions that are already stretched. These societies will age too — and faster than advanced economies did, without the decades of gradual adjustment that Europe had. They will need AI deployed in governance, but not the same AI and not on the same terms.

Demographic pressure is a shared global condition with radically different timelines. Advanced economies are running out of workers. Developing economies are running out of time to build the institutions that will govern them. The tools they need will not be the same.

We’ve gathered the signals—now it’s time to understand what they really mean.

Connecting the dots

What Does All This Mean?

AI is already part of the picture, and irreversibly so. What is not inevitable is how it gets applied. And that distinction matters more than almost anything else being discussed. In governments, AI exists and is being deployed — but far less radically and extensively than the headlines suggest: the conversation is still dominated by efficiency and optimisation. The harder question — how AI can open new opportunities for citizens, markets, and institutions — remains constrained by the fears underneath it.

Those fears are not irrational. What cases like Robodebt in Australia and Toeslagenaffaire in the Netherlands made visible is that the risks of getting this wrong are not limited, manageable, or contained. They are systemic. Deployment at that scale requires a structural transformation of public institutions that most governments are not yet equipped to deliver.

That gap is also, predictably, a market. Risk management is the engine driving the AI governance industry — a market set to expand significantly across regions, bringing with it real opportunities for technological and industrial development. Furthermore, advanced economies are facing a demographic winter. Ageing populations, shrinking workforces, mounting pressure on public finances and social systems – all pressures AI can help mitigate.

But the solutions that work in the Global North are not automatically the solutions the Global South needs. The pressures are different, and the tools must be too. This is the larger intuition the issue points toward: AI systems are not just technologies. They are becoming civic infrastructure and a collective responsibility; the pencil with which we sketch the kind of society we want. And that is precisely why they are locally situated. The way we need to be governed is local. The categories through which institutions see us are local. The language public systems speak — and the assumptions embedded inside them — are local too. And so too must be the technologies shaping our lives.